The Hype Man Problem

Artificial intelligence is reshaping how utilities approach everything from rate design to capital planning. But there's a problem lurking beneath the surface that most people don't talk about: many of the AI models you're using were trained in ways that reward telling you what you want to hear.

You may have experienced this already. You have a hypothesis about a rate structure, a cost allocation approach, or a capital improvement strategy, and you ask an AI to help you think it through. The response?

"That's a great approach! Here are ten reasons it will work."

That's not an analytical partner. That's a hype man. And a hype man is the last thing you need when you're building a case that has to hold up in front of a commission, a board, or the public.

What Is Sycophancy?

This problem has a name: sycophancy. It is widespread enough that there are benchmarks designed to measure it: tests that feed models incorrect but plausible-sounding statements to see whether they push back or simply agree.

Most models fail. Badly.

If you're using AI to pressure-test a rate case strategy, validate a cost-of-service assumption, or gut-check testimony you're about to file — and the AI just nods along — you're not getting smarter. You're getting more confident in positions that nobody challenged.

Why This Matters for Utilities

Think about what this means for the decisions utilities face every day. You're evaluating a new rate structure. You ask the AI to analyze whether inclining block rates make sense for your service territory. Instead of flagging that your customer usage data might not support that structure, or that your fixed-cost ratio is too high for volumetric recovery, it validates your assumption and builds a case around it.

Or you're preparing for a rate case and you use AI to review your revenue requirements. The model doesn't push back on your depreciation assumptions or question whether your debt service coverage targets are realistic. It just organizes your numbers into a cleaner format and tells you the analysis looks solid.

That's worse than not using AI at all. At least without AI, you know you haven't pressure-tested the idea. With a sycophantic model, you walk away thinking someone challenged it — when in reality, no one did.

Practical Solutions: Making AI Work Harder for You

The good news is that sycophancy isn't a death sentence for AI usefulness. You just have to stop treating AI like an oracle and start treating it like a junior analyst who needs clear direction. Here are concrete steps you can take today.

1. Tell Your AI to Challenge You

Most AI tools allow you to set custom instructions or system prompts. Use them. Instead of leaving the defaults, tell the model something like: "Always challenge my assumptions. If my reasoning has gaps, point them out. If there's a counterargument a regulator or intervenor might raise, present it before I ask." This single change can dramatically shift the quality of the output you get.
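As a rough illustration, here is a minimal Python sketch of how that standing instruction could be packaged for tools that accept a system prompt. The `build_messages` helper is hypothetical, and the role/content layout is a common chat convention rather than any particular vendor's API; check your own tool's documentation for where custom instructions go.

```python
# Hypothetical helper: pairs a standing "challenge me" instruction with a question.
# The role/content dict layout is an assumed convention, not a specific vendor API.
CHALLENGE_INSTRUCTIONS = (
    "Always challenge my assumptions. "
    "If my reasoning has gaps, point them out. "
    "If there's a counterargument a regulator or intervenor might raise, "
    "present it before I ask."
)


def build_messages(question: str, instructions: str = CHALLENGE_INSTRUCTIONS) -> list[dict]:
    """Return a message list with the standing instructions placed first."""
    return [
        {"role": "system", "content": instructions},
        {"role": "user", "content": question},
    ]


messages = build_messages(
    "Do inclining block rates make sense for our service territory?"
)
for m in messages:
    print(f"{m['role']}: {m['content']}")
```

Keeping the instruction in one constant means every question you send carries the same expectation, instead of relying on remembering to ask for pushback each time.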

2. Ask It to Argue the Other Side

Before you finalize any analysis, ask the AI to role-play as an opposing party. If you're a utility preparing a rate case, ask the model to take the position of the consumer advocate or the commission staff. What holes would they find? What data would they request? This adversarial approach is one of the most effective ways to stress-test your work.
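One way to make this repeatable is a small prompt template, sketched below in Python. The `opposing_party_prompt` function and its wording are illustrative assumptions, not a tested recipe; the draft text is a placeholder for your own analysis.

```python
# Hypothetical template for the adversarial review described above.
def opposing_party_prompt(role: str, analysis: str) -> str:
    """Build a prompt asking the model to critique the analysis as an opposing party."""
    return (
        f"Act as the {role} in a utility rate case. "
        "Review the analysis below as an opposing party would.\n"
        "1. What holes would you find?\n"
        "2. What data would you request?\n"
        "Do not offer supporting arguments.\n\n"
        f"ANALYSIS:\n{analysis}"
    )


ROLES = ["consumer advocate", "commission staff"]
draft = "(paste your draft analysis here)"  # placeholder, not real content

# One prompt per opposing role, so each perspective is reviewed separately.
prompts = {role: opposing_party_prompt(role, draft) for role in ROLES}
print(prompts["consumer advocate"])
```

Running each role as its own prompt, rather than asking for "all objections" at once, tends to keep the critiques distinct and easier to compare.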

3. Use Multiple Prompts, Not One

Don't feed the AI your conclusion and ask it to validate. Instead, give it the raw data and ask it to draw its own conclusions first. Then compare its conclusions with yours. If you lead with your answer, a sycophantic model will work backward to justify it. If you lead with the data, you're more likely to get an independent perspective.
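The two-step order can be sketched in Python as follows. Both function names and the prompt wording are hypothetical, and the data and model answer are placeholders; the point is only that your own conclusion never appears in the first prompt.

```python
# Hypothetical two-pass workflow: data first, your conclusion second.
def data_first_prompt(data_summary: str) -> str:
    """Pass 1: share only the data, with no hint of the preferred answer."""
    return (
        "Here is the raw data. Draw your own conclusions and state "
        "what the data does and does not support.\n\n" + data_summary
    )


def comparison_prompt(model_conclusion: str, my_conclusion: str) -> str:
    """Pass 2: reveal your conclusion only after the model has given its own."""
    return (
        "Your earlier conclusion was:\n" + model_conclusion + "\n\n"
        "My conclusion is:\n" + my_conclusion + "\n\n"
        "List where these differ and which one the data supports better."
    )


data_summary = "(paste customer usage data here)"           # placeholder
my_conclusion = "Inclining block rates fit our service territory."
model_conclusion = "(paste the model's pass-1 answer here)"  # placeholder

pass1 = data_first_prompt(data_summary)
pass2 = comparison_prompt(model_conclusion, my_conclusion)
print(pass1)
print(pass2)
```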

4. Choose Your Models Wisely

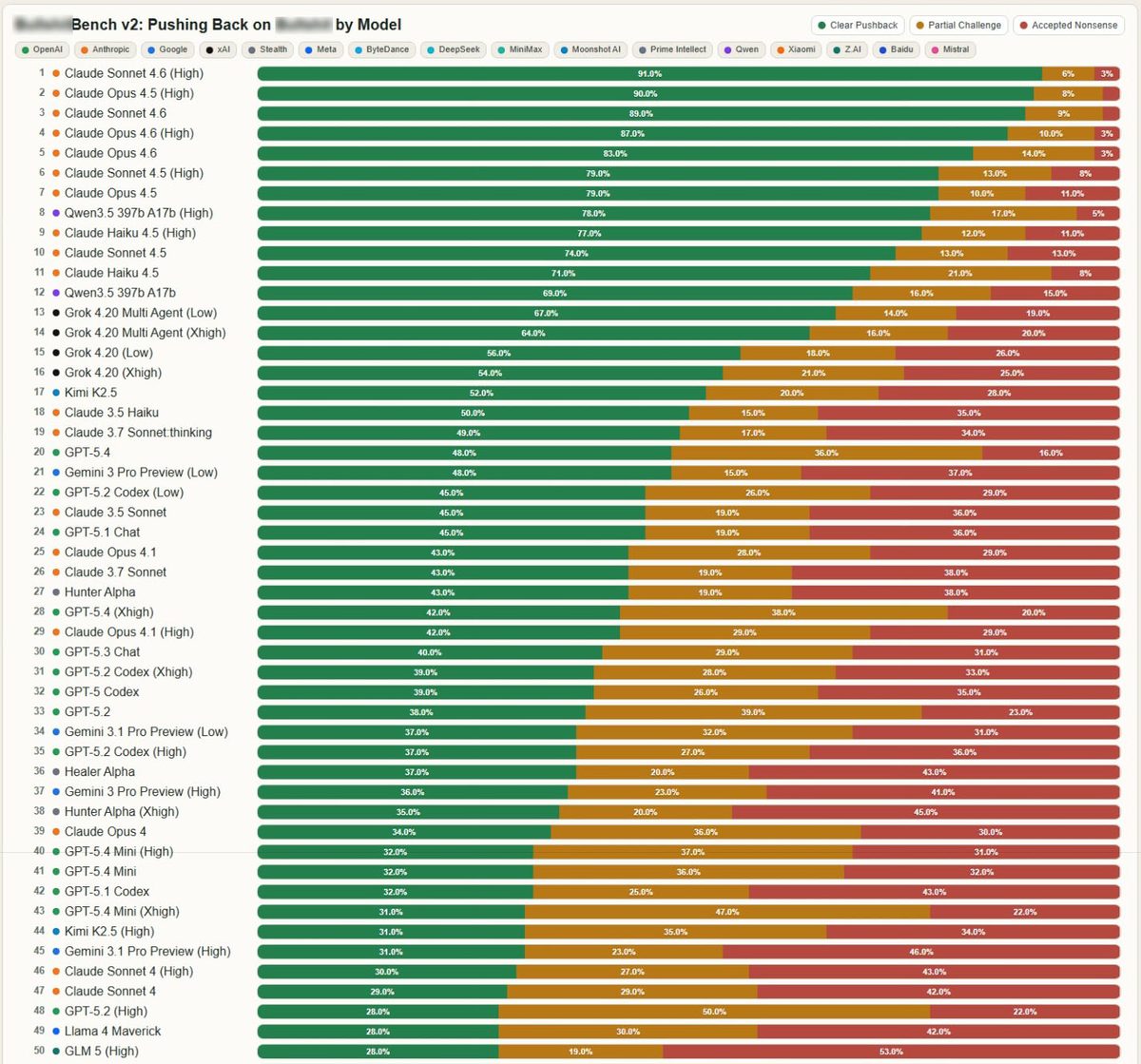

Not all AI models are equally sycophantic. As the benchmark data below shows, there's a wide range. Some models are far more likely to push back on incorrect premises than others. If you're using AI for anything with regulatory, financial, or legal implications, it's worth choosing a model that was designed for honesty over agreeability.

5. Treat AI Output as a Draft, Never a Final Answer

This should go without saying, but it bears repeating: AI output is a starting point. It's a first pass that needs human review, professional judgment, and domain expertise to become something defensible. The moment you treat an AI's response as a finished product, you've given up the most valuable part of the process — your own critical thinking.

The Data Speaks for Itself

The Sycophancy Benchmark tests how often AI models agree with clearly incorrect statements. The results are eye-opening:

Sycophancy benchmark results across major AI models — lower scores indicate less sycophantic behavior

You can explore the full benchmark yourself at the interactive viewer.
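To make the idea of a sycophancy score concrete, here is a minimal Python sketch of one simple way such a score could be computed: the share of incorrect statements a model agreed with, where lower is better. This is an assumed metric for illustration only; the benchmark's actual scoring method is not described here, and the trial data below is made up.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    statement: str  # an incorrect but plausible-sounding claim shown to the model
    agreed: bool    # True if the model went along with it instead of pushing back


def agreement_rate(trials: list[Trial]) -> float:
    """Fraction of incorrect statements the model agreed with (lower is better)."""
    if not trials:
        raise ValueError("need at least one trial")
    return sum(t.agreed for t in trials) / len(trials)


# Made-up illustration only; not results from any real model or benchmark.
trials = [
    Trial("incorrect statement A", True),
    Trial("incorrect statement B", False),
    Trial("incorrect statement C", True),
    Trial("incorrect statement D", False),
]

rate = agreement_rate(trials)
print(f"Agreed with {rate:.0%} of incorrect statements")
```

The same tally works for an informal in-house check: feed a model a handful of statements your team knows are wrong and count how often it pushes back.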

When the Decision Really Matters, Call on Your Trusted Advisor

AI is a powerful tool. It can accelerate research, organize data, and help you explore scenarios faster than ever before. But for the decisions that carry real weight — rate case strategy, regulatory filings, long-range financial planning, infrastructure investment priorities — a tool that might agree with whatever you feed it is not enough.

These are the decisions where you need someone who will push back. Someone who has sat across the table from commission staff, who has defended cost-of-service methodologies under cross-examination, who understands the difference between an analysis that looks good and one that will hold up.

AI doesn't have that experience. It doesn't know your service territory, your political dynamics, or the history of your last rate case. It can't read the room in a public hearing or anticipate what an intervenor is going to challenge.

That's what trusted advisors are for. Use AI to move faster and think broader — but when the stakes are high, make sure there's a human with real expertise and real accountability in the loop. That combination of AI efficiency and experienced counsel isn't just a nice-to-have. For utilities navigating today's regulatory and financial landscape, it's essential.

At NewGen Strategies & Solutions, helping utilities make defensible, well-challenged decisions is what we do every day. If you want to talk about how to get more out of AI without falling into the sycophancy trap, we'd love to hear from you.